Agentkube lets you configure custom API keys for various LLM providers so that AI requests run through your own accounts, giving you flexibility and control over your usage and billing.

Getting Started with Custom API Keys

You can connect your own provider accounts to extend Agentkube’s capabilities beyond the included quota limits.

Managing API Keys

Things to know about API Keys in Agentkube

- API keys are stored in ~/.agentkube/settings.json in base64 format

- You can add keys through the Agentkube Desktop app or by manually editing the settings file

- Some Agentkube features require specialized models and won’t work with custom API keys

- Custom API keys only work for features that use standard models from providers
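
Because keys are kept in base64 format, it can help to see what that encoding does before editing the settings file by hand. The sketch below only shows the round trip for a single value. It assumes the standard base64 alphabet, and it deliberately does not guess at the layout of settings.json, which this page does not describe. The function names and the sample key are hypothetical. Note that base64 is an encoding, not encryption, so anyone who can read the file can recover the keys.

```python
import base64


def encode_key(api_key: str) -> str:
    """Return the base64 text form of an API key (standard alphabet assumed)."""
    return base64.b64encode(api_key.encode("utf-8")).decode("ascii")


def decode_key(stored: str) -> str:
    """Reverse encode_key; raises binascii.Error (a ValueError) on malformed input."""
    return base64.b64decode(stored, validate=True).decode("utf-8")


# Placeholder value for illustration, not a real key.
example = "sk-example-123"
stored = encode_key(example)
assert decode_key(stored) == example
```

If a manually edited key fails verification, decoding the stored value this way is a quick check that it was not pasted in plain text or truncated.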

Supported Providers

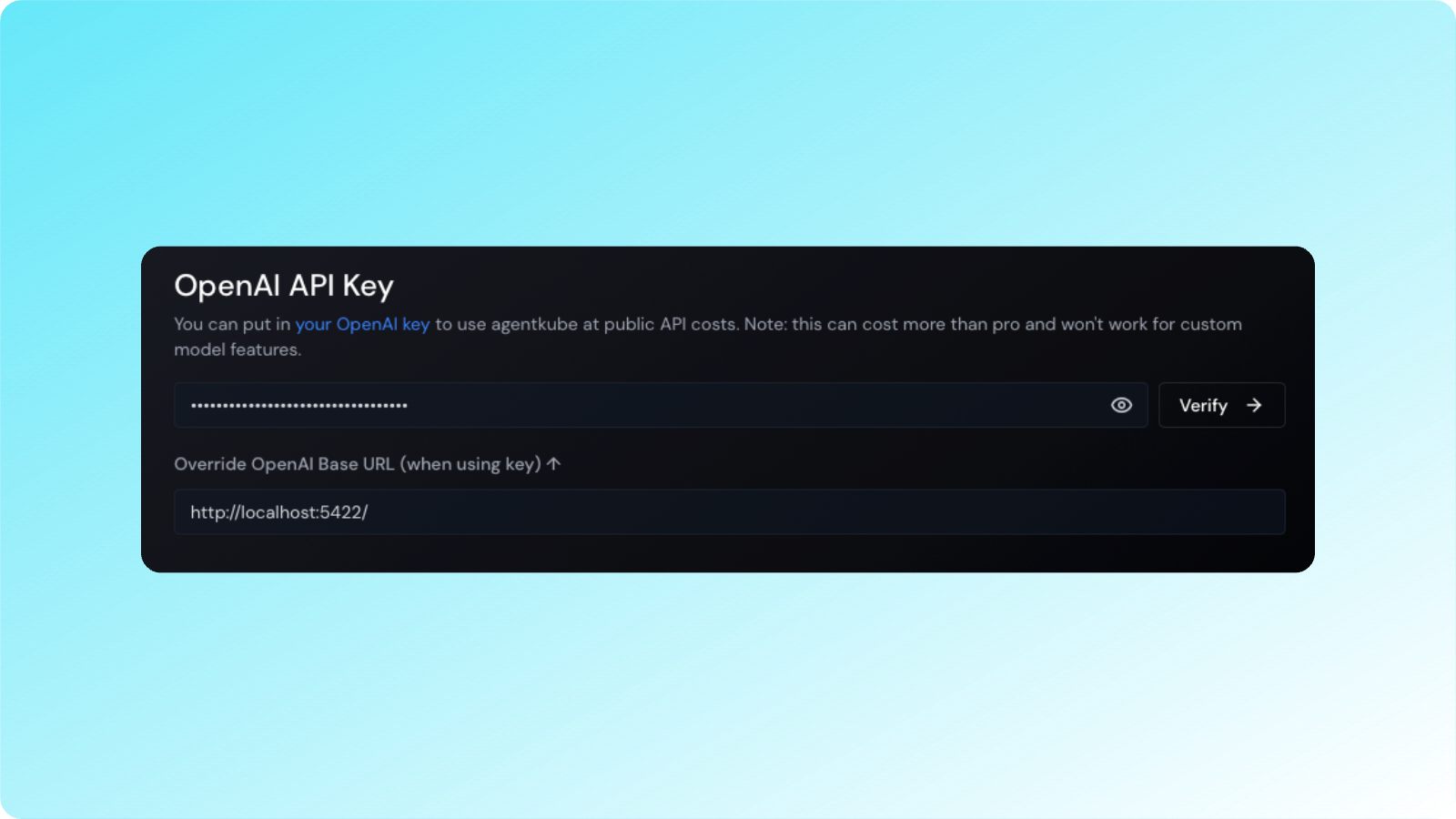

OpenAI API Keys

OpenAI’s reasoning models (o1, o1-mini, o3-mini) require special configuration and are not currently supported with custom API keys.

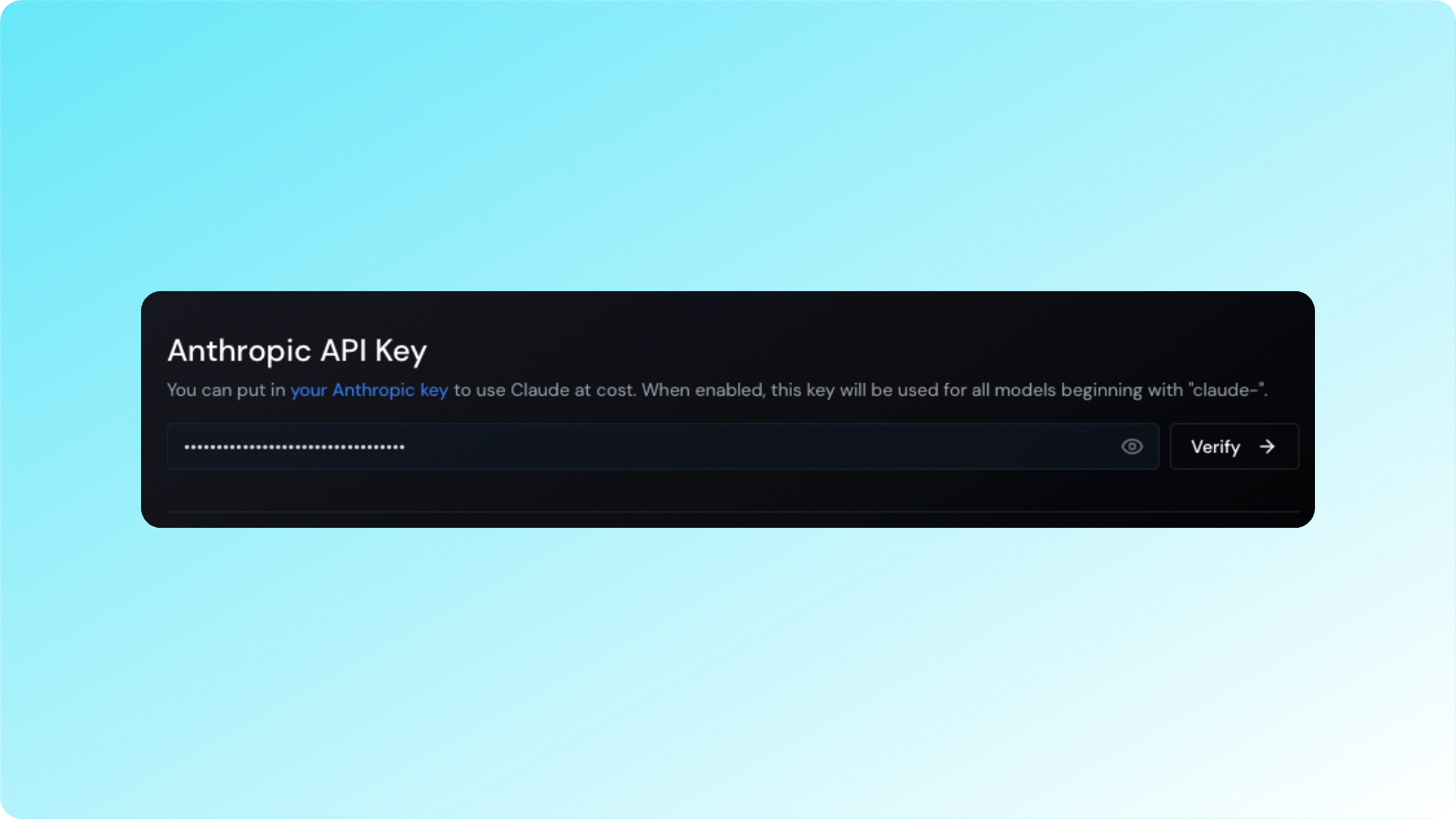

Anthropic API Keys

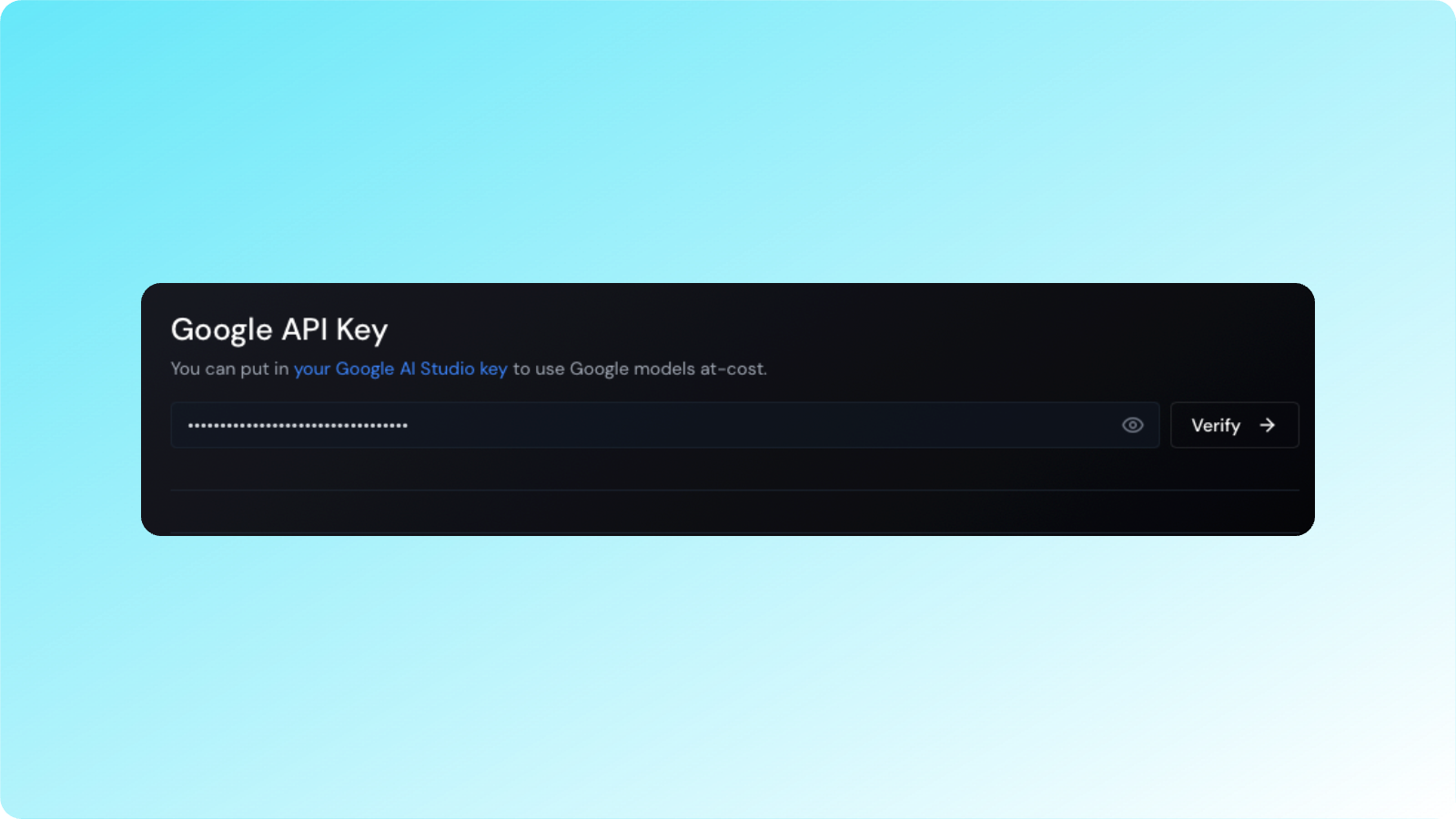

Google API Keys

With your own Google API key, you can use models such as gemini-1.5-flash-500k at your own cost.

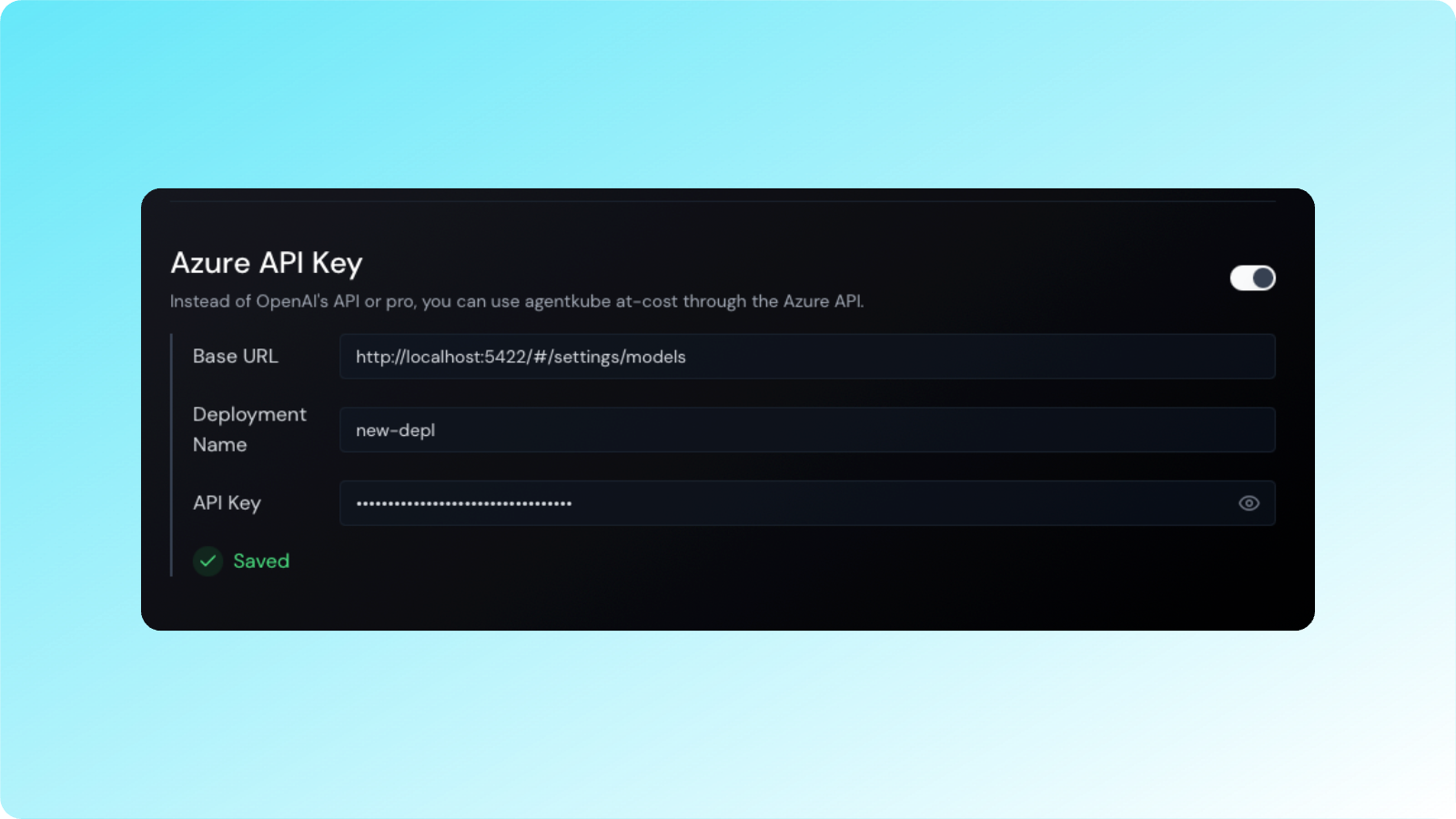

Azure Integration

AWS Bedrock

You can now connect to AWS Bedrock using access keys and secret keys, and enterprises can authenticate using IAM roles.

Configuring API Keys

To use your own API key:

- Go to Agentkube Settings > Models

- Enter your API keys in the appropriate fields

- Click on the “Verify” button

- Once validated, your API key will be enabled

Debugging API Key Issues

If you’re having issues with your API keys:

- Verify the API key is valid and has the correct permissions

- Check that the key is for the correct environment (production vs development)

- Ensure you have sufficient credits/quota with the provider

- Review the Agentkube logs for any error messages
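
One way to work through the first and third items of this checklist is to test the key against the provider directly, outside Agentkube. The sketch below is not how Agentkube verifies keys. It assumes the providers' public model-listing endpoints and header conventions as commonly documented, so check them against the provider's own reference before relying on them. The function names are hypothetical, and the status mapping follows generic HTTP semantics; individual providers may use other codes.

```python
import urllib.error
import urllib.request

# Model-listing endpoints: read-only calls that require a valid key (assumed URLs).
ENDPOINTS = {
    "openai": "https://api.openai.com/v1/models",
    "anthropic": "https://api.anthropic.com/v1/models",
}


def build_request(provider: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a key-check request for the given provider."""
    if provider == "openai":
        headers = {"Authorization": f"Bearer {api_key}"}
    elif provider == "anthropic":
        headers = {"x-api-key": api_key, "anthropic-version": "2023-06-01"}
    else:
        raise ValueError(f"unsupported provider: {provider}")
    return urllib.request.Request(ENDPOINTS[provider], headers=headers, method="GET")


def explain_status(status: int) -> str:
    """Map an HTTP status to the likeliest checklist item (generic semantics)."""
    if status == 200:
        return "key accepted"
    if status == 401:
        return "key invalid or revoked"
    if status == 403:
        return "key lacks permission for this resource"
    if status == 429:
        return "rate limit or quota exhausted"
    return f"unexpected status {status}; check provider docs"


def check_key(provider: str, api_key: str) -> str:
    """Send the request and describe the outcome. Performs a network call."""
    try:
        request = build_request(provider, api_key)
        with urllib.request.urlopen(request, timeout=10) as resp:
            return explain_status(resp.status)
    except urllib.error.HTTPError as err:
        # HTTPError carries the status code returned by the provider.
        return explain_status(err.code)
    except urllib.error.URLError as err:
        return f"network error: {err.reason}"
```

Calling check_key("openai", key) with the key read from an environment variable keeps the secret out of shell history. If the provider accepts the key here but Agentkube still rejects it, the Agentkube logs are the next place to look.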